Large PDF files and large numbers of PDF files are both difficult to manage. In this article, I will discuss how to split a PDF file into multiple smaller parts and how to merge multiple PDF files into a single file, anytime and from anywhere, without affecting the content or file structure.

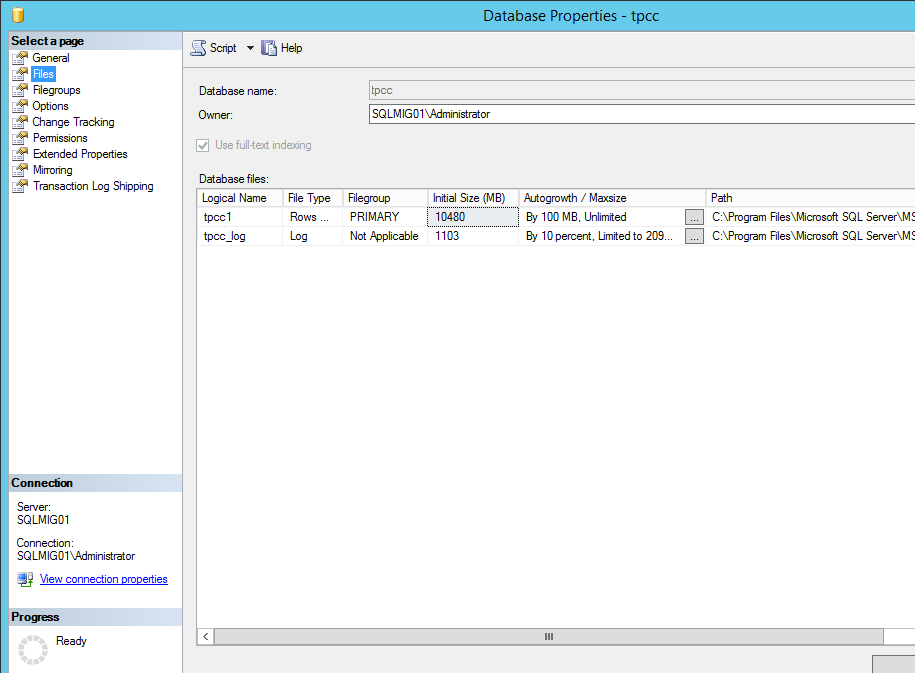

Oftentimes, we come across a physical or virtual machine that has multiple drives (partitions) on the same disk. Commonly, the C drive is the leftmost partition and has run out of space. Alternatively, we may want the drives on separate disks after converting the machine from physical to virtual, so that we have more flexibility.

Using VMware vCenter Converter, we can split these drives into separate VMDK files. This works both when converting a physical machine to a virtual machine and when converting a virtual machine to a new virtual machine.

- On the Options page, click Edit in the 'Data to copy' section

- Click Advanced

- Click Destination Layout

- Optionally (suggested), change the disk type to 'Thin'

- Click 'Add disk', select the drive (volume) you want to move to its own disk, and click 'Move down' until it is listed under the new disk

- Complete the job wizard and run the conversion job

So this is kind of a silly question, but I'm curious whether there is a downside to enabling the setting that splits VMware images into 2GB chunks.

I've never methodically backed up my VMs because they never had critical data on them, and if something went haywire, I could simply make a new one. However, I now have a few VMs, a mixture of Ubuntu, WinXP and Win7, that I'd rather not have to rebuild from scratch. They are mostly used for single-use purposes such as testing IE6 bullshit or holding an MS SQL database.

So, does splitting them into 2GB chunks make Time Machine (TM) backups feasible (or even manual backups of just the changed chunks)? Or does regular use change data in every chunk, essentially splitting the baby* for no real gain in backup efficiency?

Also, any reports of catching shit on fire would be helpful.

* Binary diffs > Wisdom of Solomon
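
Whether everyday use touches every chunk can be measured rather than guessed. You can hash each chunk file after a backup and compare the results against the previous run. The Python sketch below is an illustration only and is not a VMware tool. It assumes that split extents are named like `<name>-s001.vmdk`, `<name>-s002.vmdk`, and so on. That is the usual naming for VMware split disks, but check your own VM folder. The helper names (`build_manifest`, `changed_chunks`, `update_and_report`) and the JSON manifest file are hypothetical.

```python
import hashlib
import json
from pathlib import Path

# ASSUMPTION: split extents follow the "<name>-s001.vmdk" naming pattern.
# The small descriptor file "<name>.vmdk" does not match and is skipped.
CHUNK_PATTERN = "*-s[0-9][0-9][0-9].vmdk"


def hash_file(path: Path, block_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in 1 MiB blocks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for block in iter(lambda: f.read(block_size), b""):
            h.update(block)
    return h.hexdigest()


def build_manifest(vm_dir: Path, pattern: str = CHUNK_PATTERN) -> dict:
    """Map each chunk file name in vm_dir to the hash of its contents."""
    return {p.name: hash_file(p) for p in sorted(vm_dir.glob(pattern))}


def changed_chunks(old: dict, new: dict) -> list:
    """Chunk names that are new or whose hash differs from the old manifest."""
    return sorted(name for name, digest in new.items() if old.get(name) != digest)


def update_and_report(vm_dir: Path, manifest_path: Path) -> list:
    """Compare current chunks with the saved manifest, then save the new one.

    On the first run there is no saved manifest, so every chunk is reported.
    """
    old = json.loads(manifest_path.read_text()) if manifest_path.exists() else {}
    new = build_manifest(vm_dir)
    manifest_path.write_text(json.dumps(new, indent=2))
    return changed_chunks(old, new)
```

To use it, call `update_and_report(vm_folder, manifest_file)` after each backup and compare the number of changed chunks with the total. Ideally, shut down the VM first so that the disk files are in a consistent state. If nearly every chunk shows up as changed after a week of normal use, splitting buys little for file-level backups. Hashing reads every chunk in full and is slow on large disks. Comparing file modification times would be cheaper but would also flag files whose contents did not actually change.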
